Amazon and Anthropic have significantly expanded their strategic partnership, combining massive infrastructure commitments with fresh capital investment to accelerate the development and deployment of advanced artificial intelligence systems.

Under the new agreement, Anthropic will commit more than $100 billion over the next decade to Amazon Web Services (AWS), leveraging current and future generations of Amazon’s custom silicon, including Trainium AI accelerators and Graviton processors. The deal also secures up to 5 gigawatts (GW) of compute capacity to train and run Anthropic’s next-generation AI models, marking one of the largest infrastructure commitments in the AI sector to date.

As part of the expanded collaboration, Amazon will invest $5 billion in Anthropic immediately, with the potential to invest up to an additional $20 billion tied to future commercial milestones. This builds on the $8 billion Amazon has already invested in the company since 2023.

The partnership underscores the growing demand for scalable AI infrastructure, as more than 100,000 customers currently run Anthropic’s Claude models on AWS. The models are among the most widely used within Amazon Bedrock, Amazon’s managed service for deploying generative AI applications.

A key component of the collaboration is Project Rainier, described as one of the world’s largest AI compute clusters, where both companies are pushing the limits of large-scale AI training and deployment. The expanded agreement also includes global infrastructure growth, particularly in Asia and Europe, to support rising demand for AI inference workloads.

Anthropic will utilise multiple generations of Trainium chips, including Trainium2, Trainium3, Trainium4, and future iterations, alongside tens of millions of Graviton cores to optimise performance and cost efficiency. The company is already deploying these technologies to scale its generative AI offerings while maintaining security and operational efficiency.

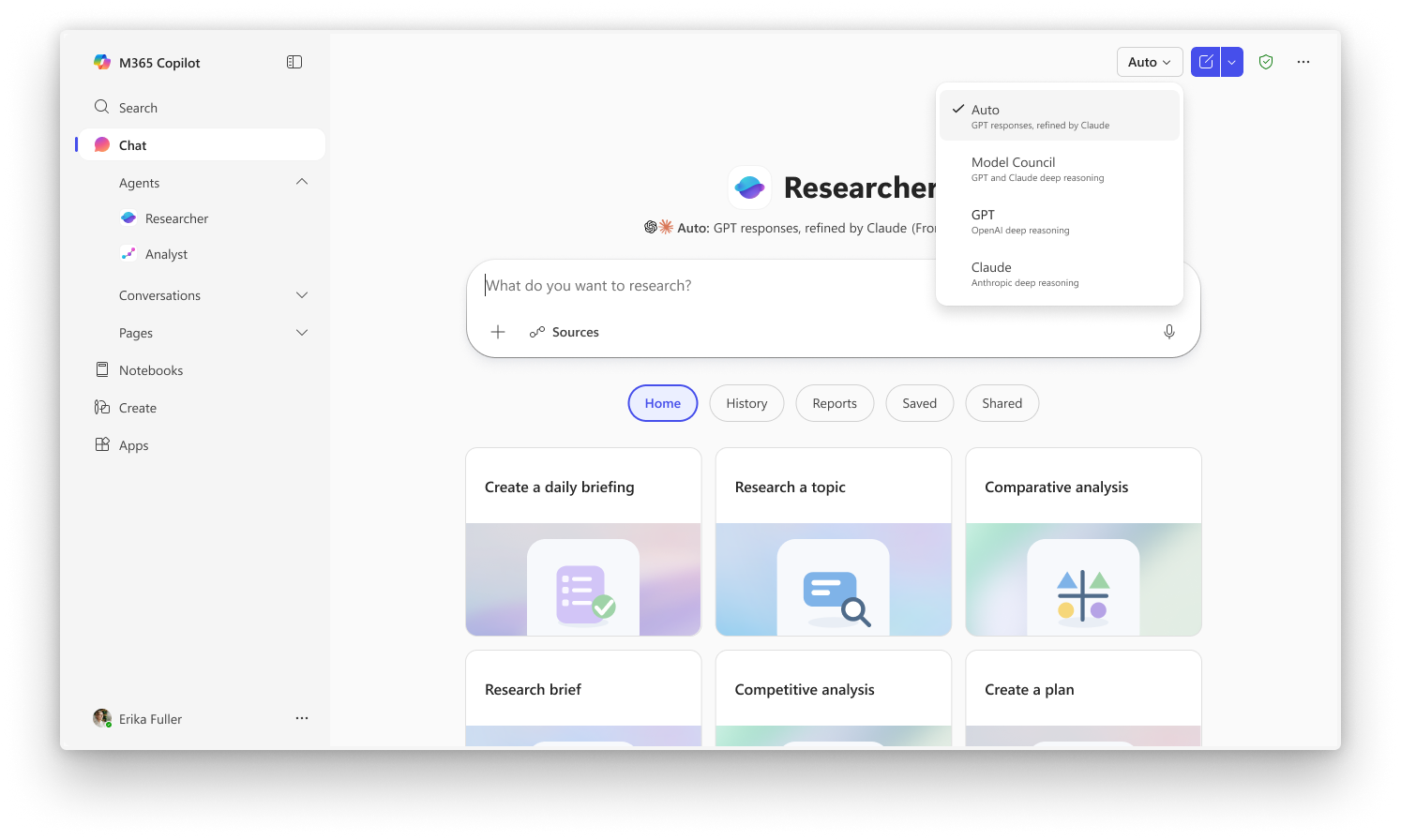

In a move aimed at simplifying developer access, AWS will integrate the full Anthropic-native Claude platform directly into its ecosystem. Customers will be able to use the platform through their existing AWS accounts, without separate credentials or billing arrangements, while retaining standard AWS access controls and monitoring tools.

“Anthropic’s commitment to run its large language models on AWS Trainium for the next decade reflects the progress we’ve made together on custom silicon,” said Andy Jassy, Amazon’s chief executive. “We will continue delivering the technology and infrastructure our customers need to build with generative AI.”

The deepened alliance signals intensifying competition among cloud and AI providers, as companies race to secure both the computing power and strategic partnerships needed to dominate the next phase of AI innovation.